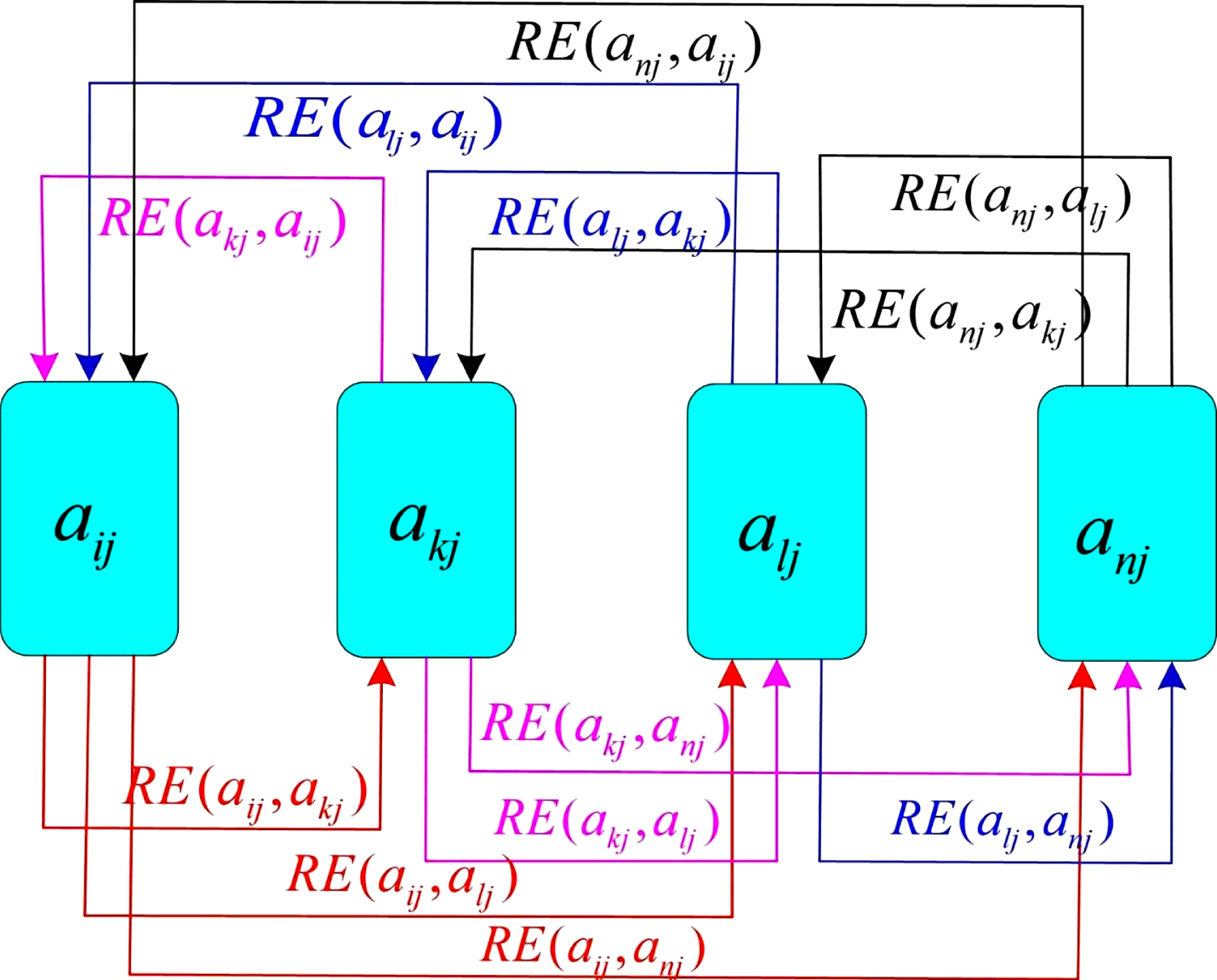

Discarding the subtraction of `(1-class_act) * np.log(1-class_pred)` gives `res/len(targets) == log_loss(targets, predictions)`.

On a more general level (the mechanics of log loss & accuracy for binary classification), you may find this answer useful.

Neural networks produce multiple outputs in multi-class classification problems. However, they cannot produce exact outputs; they can only produce continuous results. We need some additional steps to transform continuous results into exact classification results.

Applying the softmax function normalizes the outputs to the range [0, 1]. Also, the sum of the outputs is always equal to 1 when softmax is applied. After that, applying one hot encoding transforms the outputs into binary form. That is why softmax and one hot encoding are applied, in that order, to the neural network's output layer. Finally, the output labeled true is the predicted class. Here, the cross entropy function relates the probabilities to the one hot encoded labels.

Figure: Applying one hot encoding to probabilities

Cross Entropy Error Function

We need to know the derivative of the loss function to back-propagate. If the loss function were MSE, its derivative would be easy (the difference between expected and predicted output). Things become more complex when the error function is cross entropy.

$$E = -\sum_i \left[ c_i \log(p_i) + (1 - c_i) \log(1 - p_i) \right]$$

Here c refers to the one hot encoded classes (or labels), whereas p refers to the softmax applied probabilities.

PS: some sources might define the function as $E = -\sum_i c_i \log(p_i)$.

Notice that softmax is applied to the scores calculated by the neural network first, producing probabilities. Cross entropy is then applied to those softmax probabilities and the one hot encoded classes. That is why we need to calculate the derivative of the total error with respect to each score.

We can apply the chain rule to calculate this derivative, splitting it into the derivative of the error with respect to each probability and the derivative of each probability with respect to each score. Let's calculate these derivatives separately.

$$\frac{\partial E}{\partial p_i} = \frac{\partial \left( -\sum_j \left[ c_j \log(p_j) + (1 - c_j) \log(1 - p_j) \right] \right)}{\partial p_i}$$

After expanding the sum term, we can differentiate the expanded expression easily.
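The softmax, one hot encoding, and cross entropy steps described here can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the author's implementation: it assumes the two-term error $E = -\sum_i [c_i \log(p_i) + (1 - c_i) \log(1 - p_i)]$ with the natural logarithm, it treats each $p_i$ as an independent variable when differentiating, and the scores and labels are hypothetical values. It uses only the standard library.

```python
import math

def softmax(scores):
    """Turn raw scores into probabilities in [0, 1] that sum to 1."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]  # subtract max for numerical stability
    total = sum(exps)
    return [e / total for e in exps]

def one_hot(probs):
    """Mark the most probable class with 1 and every other class with 0."""
    best = probs.index(max(probs))
    return [1 if i == best else 0 for i in range(len(probs))]

def cross_entropy(c, p):
    """Two-term error: -sum(c_i*log(p_i) + (1 - c_i)*log(1 - p_i))."""
    return -sum(ci * math.log(pi) + (1 - ci) * math.log(1 - pi)
                for ci, pi in zip(c, p))

def dE_dp(c, p):
    """Analytic partial derivative of the error with respect to each p_i."""
    return [-ci / pi + (1 - ci) / (1 - pi) for ci, pi in zip(c, p)]

scores = [2.0, 1.0, 0.1]  # hypothetical output-layer scores
labels = [1, 0, 0]        # hypothetical one hot encoded true class

probs = softmax(scores)              # continuous results, sum to 1
predicted = one_hot(probs)           # exact classification result
error = cross_entropy(labels, probs)
grad = dE_dp(labels, probs)          # first factor of the chain rule
```

Differentiating the expanded sum term by term gives $\partial E / \partial p_i = -c_i / p_i + (1 - c_i) / (1 - p_i)$, which is what `dE_dp` returns. The second chain rule factor, the derivative of each probability with respect to each score, is not covered in this sketch.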
`log_loss(targets, predictions) == cross_entropy(predictions, targets)`

Your `cross_entropy` function seems to work fine.

> Clearly I am misunderstanding how -y log (y_hat) is to be calculated.

Indeed, reading more carefully the fast.ai wiki you have linked to, you'll see that the RHS of the equation holds only for binary classification (where always one of y and 1-y will be zero), which is not the case here: you have a 4-class multinomial classification.
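The point about binary versus multinomial classification can be checked numerically. The sketch below is only an illustration: the function names `categorical_ce` and `binary_ce` and all input values are hypothetical, and it uses the standard library instead of NumPy so that it runs without dependencies.

```python
import math

def categorical_ce(y, y_hat):
    """Middle form for one sample: -sum_k y_k * log(y_hat_k)."""
    return -sum(yk * math.log(pk) for yk, pk in zip(y, y_hat))

def binary_ce(y, y_hat):
    """RHS form for one sample: scalar label y, scalar predicted probability y_hat."""
    return -y * math.log(y_hat) - (1 - y) * math.log(1 - y_hat)

# Binary case (hypothetical values): label 1, predicted P(class 1) = 0.8.
y, y_hat = 1, 0.8
two_class = categorical_ce([1 - y, y], [1 - y_hat, y_hat])
binary = binary_ce(y, y_hat)  # agrees with two_class

# 4-class case (hypothetical values): applying the RHS form to every class
# adds a -(1 - y_k) * log(1 - y_hat_k) term for each class that is not the true one.
target = [1, 0, 0, 0]
pred = [0.25, 0.25, 0.25, 0.25]
multi = categorical_ce(target, pred)
per_class_binary = sum(binary_ce(t, p) for t, p in zip(target, pred))
extra = per_class_binary - multi  # the three extra terms, -3 * log(0.75)
```

With two classes the forms coincide because the second class probability is exactly `1 - y_hat`. With four classes the per-class binary sum picks up three extra positive terms, so it is larger than the categorical value.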

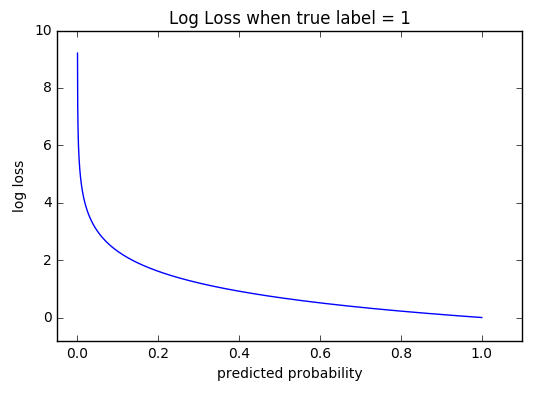

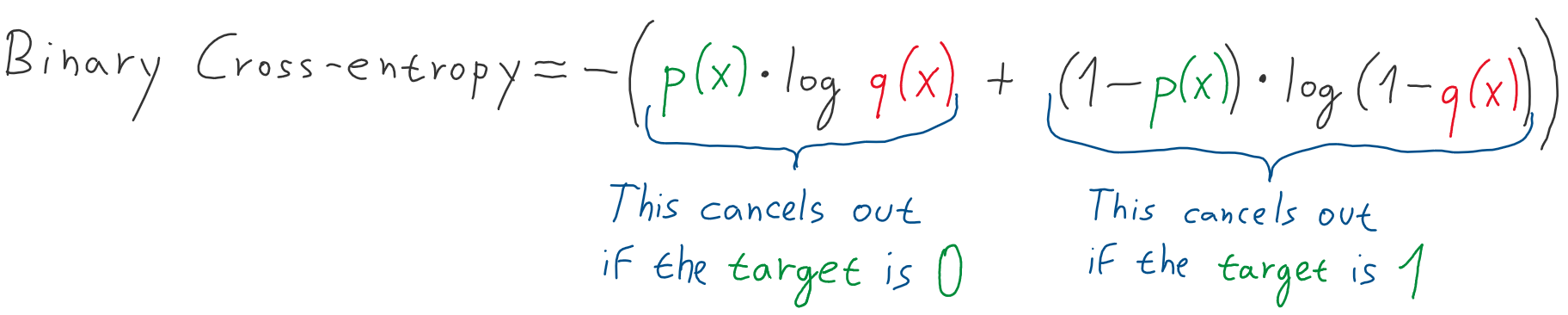

    res = 0
    for act_row, pred_row in zip(targets, np.array(predictions)):
        for class_act, class_pred in zip(act_row, pred_row):
            res += - class_act * np.log(class_pred) - (1-class_act) * np.log(1-class_pred)

    print(res/len(targets))

And the output is 1.1549753967602232, which is not quite the same. I have tried the same implementation with NumPy, but it also didn't work.

PS: I am also curious: -y log (y_hat) seems to me to be the same as - sigma(p_i * log( q_i)), so how come there is a -(1-y) log(1-y_hat) part? Clearly I am misunderstanding how -y log (y_hat) is to be calculated.

I cannot reproduce the difference in the results you report in the first part (you also refer to an `ans` variable, which you do not seem to define; I guess it is `x`):

    import numpy as np

The results:

    cross_entropy(predictions, targets)
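The loop from the question can be re-run with and without the `(1-class_act)` term to see where the 1.1549753967602232 figure comes from. The `predictions` and `targets` values below are an assumption: they are not given in the text above and were chosen because they reproduce that figure. The helper `mean_loss` and its `include_complement` flag are hypothetical names, and the sketch uses `math.log` from the standard library in place of `np.log`.

```python
import math

# Illustrative data (an assumption, not given in the text above).
predictions = [[0.25, 0.25, 0.25, 0.25],
               [0.01, 0.01, 0.01, 0.97]]
targets = [[1, 0, 0, 0],
           [0, 0, 0, 1]]

def mean_loss(targets, predictions, include_complement):
    """Average per-sample loss; optionally add the -(1 - y) * log(1 - y_hat) term."""
    res = 0.0
    for act_row, pred_row in zip(targets, predictions):
        for class_act, class_pred in zip(act_row, pred_row):
            res += -class_act * math.log(class_pred)
            if include_complement:
                res += -(1 - class_act) * math.log(1 - class_pred)
    return res / len(targets)

with_complement = mean_loss(targets, predictions, True)
without_complement = mean_loss(targets, predictions, False)

print(with_complement)     # about 1.15498, the value reported for the second approach
print(without_complement)  # about 0.70838, the multi-class cross entropy
```

The gap between the two numbers is the contribution of the non-target classes, which the binary form penalises and the multi-class form ignores.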

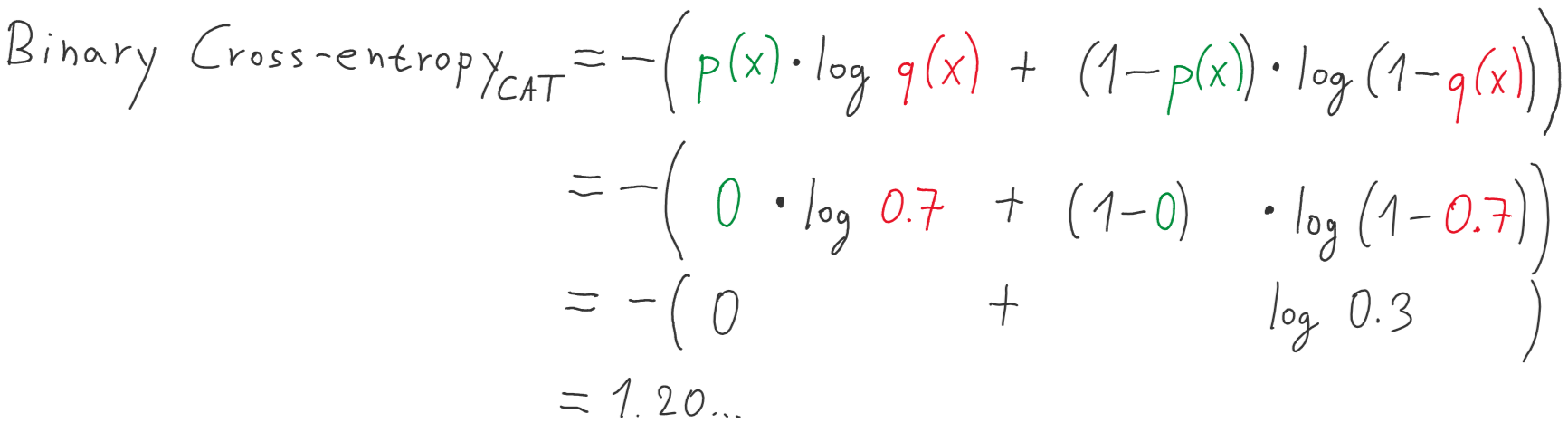

I was reading up on log-loss and cross-entropy, and it seems like there are 2 approaches for calculating it, based on the equations in the accompanying figure.

    import numpy as np
    from sklearn.metrics import log_loss

    def cross_entropy(predictions, targets):
        N = predictions.shape[0]  # number of samples
        ce = -np.sum(targets * np.log(predictions)) / N
        return ce

    print(log_loss(targets, predictions), 'our_answer:', ans)

The above implementation is the middle part of the equation. The second approach is based on the RHS part of the equation.